Switching to a New EDC System: A Strategic Guide for Modern Clinical Trials

- Abriti Rai

- March 24, 2026

On this Page

- Summary

- Spot the Breaking Point: When Legacy EDCs Fail Modern Trials

- What a Modern EDC Migration Actually Looks Like

- What to Look for When Selecting a New EDC System

- Preparing the Organization for the new EDC System

- Measuring Success after Switching to a New EDC System

- Closing Perspective

Summary

Clinical trials move at breakneck speed in 2026, with adaptive designs, global portfolios, and data from wearables, ePROs, imaging, and labs overwhelming legacy Electronic Data Capture (EDC) systems. These platforms, built for simpler times, now force workarounds that delay database locks, inflate costs, and obscure insights. Yet the risks tied to data migration, regulatory validation, team disruption, and live study continuity often make switching EDC systems daunting.

This guide arms sponsors and CROs with a roadmap. We'll pinpoint when to switch, dismantle migration myths, and spotlight AI as the force multiplier turning EDCs from passive vaults into intelligent trial engines.

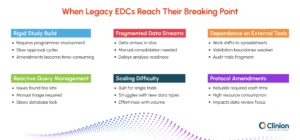

Spot the Breaking Point: When Legacy EDCs Fail Modern Trials

Most EDC systems are implemented to solve the needs of a specific point in time. As organizations expand into global programs, manage multiple concurrent trials, and adopt more adaptive protocol models, expectations placed on the EDC grow. Eventually, the gap between what the system was designed to do and what the organization needs it to do becomes operationally significant.

The following failure patterns are among the most common indicators that a system has reached its limits.

Rigid Study Build Models Delay Trial Startups

Legacy architectures require programmer intervention for every form, edit check, or derivation, followed by sequential approvals. In an environment where protocols average more than 150 amendments over their lifecycle, the overhead becomes a meaningful source of delay. Database build timelines can extend by eight to twelve weeks, and mid-study changes consume resources that teams cannot always spare.

Fragmented Data Streams

Modern trials generate data from multiple sources simultaneously. Legacy EDCs were not designed to ingest this variety natively. As a result, teams receive data in different formats and must manually consolidate it before analysis can begin. This reconciliation work introduces error risk and delays downstream review cycles.

Dependence on External Tools

When an EDC cannot support a required function, teams adapt by routing work through spreadsheets, BI dashboards, or custom scripts. These workarounds are often effective in the short term, but they move critical review steps outside validated environments. Version control becomes difficult, audit trails become fragmented, and regulatory exposure increases over time.

Reactive Query Management

Traditional query workflows rely on teams reviewing large volumes of flagged data after entry and triaging issues manually. This retrospective approach slows resolution cycles. On average, reactive query management extends study timelines by approximately 25% compared to continuous review models.

Difficulty Scaling Across Studies

Systems designed for single-study execution often cannot support expanding portfolios or diverse data types such as genomics, imaging, and continuous device feeds. As pipelines grow, organizations face performance bottlenecks and must rely on additional integrations that add complexity rather than cohesion.

Protocol Amendments as a Resource Drain

Mid-study protocol changes are particularly costly in legacy environments. Reconfiguration, revalidation, and downstream dataset updates can absorb up to 40% of biostatistics and data management effort during amendment periods, capacity that would otherwise be directed toward data quality and analysis.

The decision to switch is rarely made because a system has failed outright. It is most often made because the accumulated cost of workarounds has become greater than the cost of change.

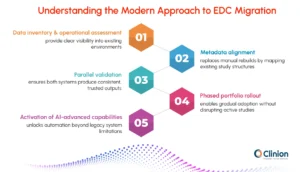

What a Modern EDC Migration Actually Looks Like

For organizations that have attempted system migrations in the past, the experience may have been disruptive. Data was recreated manually, validation timelines were long, active studies were paused, and teams required significant retraining. These experiences have shaped a reasonable reluctance to consider switching again.

The methods used to migrate EDC systems have evolved considerably. Understanding how modern migrations work is an important part of assessing whether a switch is feasible.

Data Inventory and Operational Assessment

Effective transitions begin with a clear understanding of the existing environment. Teams map all datasets, integrations, study configurations, and downstream dependencies before any system change begins. This replaces reactive troubleshooting with proactive risk identification and prevents the kind of late-stage surprises that historically extended migration timelines.
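The inventory step described above can be pictured as a small dependency map. The sketch below is illustrative only: the system names and the `FEEDS` structure are hypothetical placeholders, not taken from any specific EDC product. It walks the map to list everything downstream of the legacy system, which is the set of dependencies a migration plan would need to cover.

```python
from collections import deque

# Hypothetical inventory: each system maps to the systems it feeds.
FEEDS = {
    "central_lab": ["legacy_edc"],
    "epro": ["legacy_edc"],
    "legacy_edc": ["sdtm_export", "safety_db", "bi_dashboard"],
    "sdtm_export": ["submission_package"],
    "bi_dashboard": [],
}


def downstream(system, feeds):
    """Return every system reachable from `system`, in breadth-first order."""
    seen, queue = [], deque(feeds.get(system, []))
    while queue:
        node = queue.popleft()
        if node in seen:
            continue
        seen.append(node)
        queue.extend(feeds.get(node, []))
    return seen


def upstream(system, feeds):
    """Return the systems that feed directly into `system`."""
    return [source for source, targets in feeds.items() if system in targets]


affected = downstream("legacy_edc", FEEDS)
inbound = upstream("legacy_edc", FEEDS)
print("Downstream of legacy EDC:", affected)
print("Direct inbound feeds:", inbound)
```

Keeping the inventory in a structured form like this makes it easier to confirm that no integration is overlooked before the system change begins.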

Metadata Alignment Instead of Manual Rebuilds

Rather than recreating studies from scratch in the new environment, modern approaches map existing study structures such as forms, edit checks, roles, and workflows into the new platform. This translation preserves study logic while significantly reducing build time and avoiding the introduction of new inconsistencies into ongoing data collection.
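As a rough illustration of mapping rather than rebuilding, the sketch below translates legacy field definitions into a target format. Everything here is an assumption made for the example: the `TYPE_MAP` vocabulary, the `LegacyField` structure, and the field names are hypothetical and do not reflect any vendor's metadata model. Fields that cannot be mapped are surfaced for manual review instead of being silently guessed.

```python
from dataclasses import dataclass

# Hypothetical type vocabulary; real platforms define their own.
TYPE_MAP = {"TEXT": "string", "NUM": "decimal", "DATE": "date", "CODELIST": "choice"}


@dataclass(frozen=True)
class LegacyField:
    form: str
    name: str
    ftype: str
    required: bool


def map_fields(legacy_fields):
    """Translate legacy field definitions; collect anything that cannot be mapped."""
    mapped, unmapped = [], []
    for field in legacy_fields:
        target_type = TYPE_MAP.get(field.ftype)
        if target_type is None:
            # Flag the gap for a study builder rather than inventing a type.
            unmapped.append(field)
            continue
        mapped.append(
            {
                "form": field.form,
                "field": field.name.lower(),
                "type": target_type,
                "required": field.required,
            }
        )
    return mapped, unmapped


legacy = [
    LegacyField("VITALS", "SYSBP", "NUM", True),
    LegacyField("VITALS", "VISITDT", "DATE", True),
    LegacyField("AE", "AETERM", "TEXT", True),
    LegacyField("AE", "AESIG", "ESIGNATURE", False),
]
mapped, unmapped = map_fields(legacy)
print(len(mapped), "fields mapped;", [f.name for f in unmapped], "need manual review")
```

The design choice worth noting is the explicit `unmapped` list: a translation that reports what it could not carry over is easier to validate than one that always produces output.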

Parallel Validation

During the validation phase, both the legacy and new systems operate simultaneously. Outputs are compared to confirm that the new environment produces consistent, equivalent results. This approach maintains auditability and gives clinical, data management, and regulatory stakeholders the evidence they need to accept the transition with confidence. Active trials continue uninterrupted during this period.
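The output comparison at the heart of parallel validation can be sketched as a record-by-record diff. This is a minimal example under stated assumptions: both systems are assumed to export rows with `subject`, `visit`, `field`, and `value` keys, which is a hypothetical layout chosen for illustration.

```python
def compare_exports(legacy_rows, new_rows, key_fields=("subject", "visit", "field")):
    """Compare two exports record by record and report every discrepancy."""

    def index(rows):
        return {tuple(row[k] for k in key_fields): row["value"] for row in rows}

    legacy_idx, new_idx = index(legacy_rows), index(new_rows)
    shared = legacy_idx.keys() & new_idx.keys()
    return {
        "missing_in_new": sorted(legacy_idx.keys() - new_idx.keys()),
        "extra_in_new": sorted(new_idx.keys() - legacy_idx.keys()),
        "value_mismatch": sorted(k for k in shared if legacy_idx[k] != new_idx[k]),
    }


# Illustrative data only.
legacy_export = [
    {"subject": "001", "visit": "V1", "field": "SYSBP", "value": "120"},
    {"subject": "001", "visit": "V2", "field": "SYSBP", "value": "118"},
    {"subject": "002", "visit": "V1", "field": "SYSBP", "value": "135"},
]
new_export = [
    {"subject": "001", "visit": "V1", "field": "SYSBP", "value": "120"},
    {"subject": "001", "visit": "V2", "field": "SYSBP", "value": "181"},
]

report = compare_exports(legacy_export, new_export)
equivalent = not any(report.values())
print("Equivalent:", equivalent)
for category, keys in report.items():
    print(category, keys)
```

A report like this, archived for each comparison run, is one way to produce the evidence of equivalence that stakeholders need before accepting the transition.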

Phased Portfolio Rollout

Rather than moving all studies at once, deployment begins with new or lower-risk studies. As teams gain familiarity with the platform and integrations stabilize, the rollout extends to active programs. This staged approach allows for process refinement and progressive training without overwhelming sites or internal teams.
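One way to make the staged approach concrete is to sort the portfolio into rollout waves. The sketch below is a simplified assumption, not a prescribed method: the risk criteria (site count, pivotal status), the `LOW_RISK_MAX_SITES` cutoff, and the study records are all hypothetical.

```python
LOW_RISK_MAX_SITES = 10  # hypothetical cutoff for a "lower-risk" active study


def plan_waves(studies):
    """Assign studies to waves: new studies first, then lower-risk, then the rest."""
    waves = {1: [], 2: [], 3: []}
    for study in studies:
        if study["status"] == "planned":
            wave = 1  # new studies start on the new platform
        elif study["sites"] <= LOW_RISK_MAX_SITES and not study["pivotal"]:
            wave = 2  # small, non-pivotal active studies move next
        else:
            wave = 3  # large or pivotal active programs move last
        waves[wave].append(study["id"])
    return waves


# Illustrative portfolio only.
portfolio = [
    {"id": "ST-101", "status": "active", "sites": 45, "pivotal": True},
    {"id": "ST-102", "status": "planned", "sites": 12, "pivotal": False},
    {"id": "ST-103", "status": "active", "sites": 6, "pivotal": False},
    {"id": "ST-104", "status": "active", "sites": 8, "pivotal": True},
]
waves = plan_waves(portfolio)
for number, ids in waves.items():
    print(f"Wave {number}: {ids}")
```

In practice the criteria would come from the organization's own risk assessment; the point is that the sequencing rule is written down and applied consistently.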

Activation of AI-Advanced Capabilities

A meaningful difference between legacy migrations and modern transitions is what happens after the technical move is complete. Organizations moving to current platforms have the opportunity to activate AI-supported workflows, unified data environments, and automated review processes that were not available in their previous system. The transition is not just a change of platform; it is an upgrade in how data is managed and acted upon.

A well-executed transition preserves everything that exists, from audit trails and data lineage to validated states, while opening access to capabilities the previous system could not support.

What to Look for When Selecting a New EDC System

Once an organization has determined that a transition is warranted, the EDC platform selection becomes the central decision. The goal is not to replicate current workflows in a new interface. It is to choose a system that can support how trials are designed and executed today, and adapt to how they will be designed and executed in the years ahead.

Configurability Without Heavy Customization

A modern EDC should be able to support diverse study designs — adaptive protocols, decentralized elements, hybrid data collection — without requiring bespoke programming for each variation. Configurability should be accessible to trained study builders, not reserved for development teams.

Native Integration with the Broader Trial Ecosystem

The EDC does not function in isolation. It must connect reliably with RTSM, ePRO platforms, central labs, safety systems, and imaging repositories. Native integrations, those built into the platform rather than assembled through custom middleware, reduce maintenance burden and minimize reconciliation risk.

Standardization and Reuse Across Studies

Platforms that support global libraries of forms, edit checks, and coding conventions allow teams to build on validated structures rather than starting from scratch with each protocol. CDISC-aligned outputs reduce the downstream effort required to prepare submission-ready datasets.

Scalability Without Proportional Resource Growth

The platform should allow teams to manage a growing study portfolio without a corresponding increase in headcount. Automation, reuse, and intelligent review workflows are the mechanisms that enable this. If managing ten studies requires ten times the effort of managing one, the platform has not delivered on its scalability promise.

The Role of AI in Platform Evaluation

AI-supported capabilities have moved from an aspirational feature to a practical differentiator in EDC selection. The most meaningful applications are those that reduce manual review burden and allow teams to direct their attention toward decisions rather than data aggregation.

In current platforms, AI supports early detection of data anomalies before they become protocol deviations, risk-based prioritization of queries so teams address the highest-impact issues first, assisted medical coding and classification, and pattern recognition across sites and subjects that would not be visible in manual review. These capabilities do not replace clinical or data management expertise. They allow that expertise to be applied where it matters most.

| Capability | Legacy EDC | AI-Enabled EDC |
| --- | --- | --- |
| Data Review | Manual, retrospective | Continuous, automated assistance |
| Reconciliation | Resource-intensive, fragmented | Unified and dynamic |
| Query Management | Volume-driven triage | Risk-prioritized workflows |
| Insight Access | Programmer-dependent | Direct, user-driven |
| Scalability | Linear with headcount | Scales with study complexity |
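To show what risk-based prioritization of queries means in practice, here is a deliberately simple rule-based stand-in. It is not a machine-learning model and not how any particular platform scores queries; the `CRITICALITY` weights, the 30-day age cap, and the query records are hypothetical values chosen for illustration.

```python
# Hypothetical weights: higher means the data category matters more to the trial.
CRITICALITY = {"primary_endpoint": 3.0, "safety": 2.5, "eligibility": 2.0, "other": 1.0}
AGE_CAP_DAYS = 30


def priority(query):
    """Score a query by data criticality, with a capped boost for age."""
    weight = CRITICALITY.get(query["category"], 1.0)
    # The cap keeps the age factor between 1 and 2, so with these weights an old
    # low-impact query (at most 2.0) cannot outrank a fresh safety query (2.5).
    age_factor = 1 + min(query["days_open"], AGE_CAP_DAYS) / AGE_CAP_DAYS
    return weight * age_factor


# Illustrative queries only.
queries = [
    {"id": "Q1", "category": "other", "days_open": 45},
    {"id": "Q2", "category": "safety", "days_open": 3},
    {"id": "Q3", "category": "primary_endpoint", "days_open": 10},
    {"id": "Q4", "category": "eligibility", "days_open": 6},
]
ranked = sorted(queries, key=priority, reverse=True)
for query in ranked:
    print(query["id"], query["category"], round(priority(query), 2))
```

A volume-driven workflow would work through these in arrival order; the scored list puts the primary endpoint and safety queries ahead of the oldest low-impact one.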

Preparing the Organization for the new EDC System

Technology transitions in clinical operations succeed or fail based on organizational readiness as much as on the platform itself. A well-configured system that teams do not trust, do not understand, or actively work around will not deliver on its potential. Preparing the organization is not a secondary consideration; it is core to the investment.

Aligning Stakeholders Early

Switching to a new EDC system impacts clinical, data management, IT, biostatistics, regulatory, and site teams alike. Aligning these groups early ensures shared understanding of the rationale, scope, and timeline, reducing friction later in the transition.

Eliminating Workarounds Rather Than Replicating Them

Transitions often fail when legacy processes are copied into the new system. Many of those workflows existed only to compensate for prior limitations. Identifying and removing them is essential to realizing the value of the move.

Reframing Training Around Outcomes

Training should explain how work is changing, not just how to use the interface. When users understand the purpose behind new workflows, adoption is faster and more consistent.

Redefining Roles as Automation Increases

With automation reducing manual reconciliation and review, teams shift toward oversight and decision support. Acknowledging this change helps organizations adapt more effectively.

Data Integrity and Compliance During the EDC Transition

For sponsors and CROs operating under regulatory scrutiny, the most significant concern about switching EDC systems mid-program is audit exposure. Any gap in data traceability, any question about validated state, or any inconsistency between legacy and new system outputs can create complications that extend well beyond the transition itself.

Modern transition methodologies are designed specifically to address these concerns.

| Regulatory Concern | Traditional Migration Risk | Modern Approach |
| --- | --- | --- |
| Data Traceability | Manual recreation risks gaps in lineage | Structured mapping preserves full data lineage from source to submission |
| System Validation | Full revalidation often required regardless of scope | Risk-based validation focuses effort on areas of genuine change |
| Audit Readiness | Documentation fragmented across legacy and new environments | Automated validation records and logs maintained throughout |
| Study Continuity | Active studies may be paused during transition windows | Parallel environments allow ongoing data collection without interruption |
| Amendment Traceability | Protocol changes hard to trace across migrated data | Metadata alignment preserves amendment history and downstream impact |

Measuring Success after Switching to a New EDC System

Each stakeholder evaluates the switch differently, and the business case needs to speak to each set of concerns without becoming a different document for each audience.

Quantifying the Cost of Inaction

The financial case for switching typically becomes clearer when cost drivers are evaluated holistically, across studies and over time, rather than study by study. Extended database build timelines delay first patient visits. Manual reconciliation inflates operational labor. Late-stage data cleaning compresses submission windows and requires resources at the worst possible moment. Fragmented validation tools increase audit preparation costs. Each of these is a real, measurable impact that can be traced back to EDC limitations.

When organizations map these costs against the investment required to transition, the question often shifts from whether they can afford to switch, to whether they can afford not to.

| Operational Area | Legacy System Impact | After Transition |
| --- | --- | --- |
| Study Build | 8–12 week extended timelines due to sequential approvals | Faster configuration with reusable validated libraries |
| Data Cleaning | Retrospective, resource-heavy correction cycles late in the study | Continuous review model reduces late-stage burden significantly |
| Integrations | Custom-built, fragile connections requiring ongoing maintenance | Native ecosystem connections with reduced reconciliation overhead |
| Protocol Amendments | Up to 40% of DM and stats effort consumed per amendment cycle | Streamlined reconfiguration with reduced validation overhead |
| Portfolio Growth | Linear resource growth with each additional study | Scalable execution without proportional cost increase |

Measuring Impact After Go-Live

The same operational areas that justify the investment also serve as the indicators of success after transition. Rather than measuring adoption (how many users have logged in, how many studies have been migrated), organizations are better served by tracking performance against the specific pain points that drove the decision.

Study build speed, query resolution cycle times, reconciliation effort per study, database lock predictability, and cross-functional data visibility are all measurable, and all directly connected to the core value proposition of a modern EDC platform. Establishing baselines before the transition and tracking these indicators at 90-day intervals post-launch gives leadership clear, attributable evidence of impact.
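Tracking against a baseline at 90-day intervals comes down to simple arithmetic, sketched below. The metric names and every number are placeholders invented for the example, not results from any study or platform; only the calculation is the point.

```python
def percent_change(baseline, current):
    """Percentage change from baseline; negative means the metric went down."""
    return round((current - baseline) * 100 / baseline, 1)


# Placeholder figures for illustration only.
baseline = {
    "study_build_days": 80,
    "query_resolution_days": 10,
    "recon_hours_per_study": 200,
}
checkpoints = {
    90: {"study_build_days": 60, "query_resolution_days": 8, "recon_hours_per_study": 150},
    180: {"study_build_days": 48, "query_resolution_days": 6, "recon_hours_per_study": 110},
}

trend = {
    day: {name: percent_change(baseline[name], value) for name, value in metrics.items()}
    for day, metrics in sorted(checkpoints.items())
}
for day, changes in trend.items():
    print(f"Day {day}: {changes}")
```

Because every checkpoint is compared with the same pre-transition baseline, the figures stay comparable across reporting periods.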

AI capabilities strengthen these gains over time. Rather than reacting to data quality issues late in the study cycle, teams operating on modern platforms can intervene earlier, maintain cleaner datasets throughout, and approach database lock with greater confidence. The gains tend to compound: as teams become more proficient with AI-assisted review, the volume of issues that reach late-stage cleaning continues to decline.

The metrics that justified the switch are the same ones that should be tracked after it. If the transition worked, those numbers will move, and the organization will have the evidence to build on for the next decision.

Closing Perspective

Switching to a new EDC system is a significant operational undertaking. It requires planning, stakeholder alignment, and a clear-eyed assessment of both the risks of change and the costs of inaction. But for organizations managing complex, multi-study portfolios under increasing regulatory and operational pressure, the question is rarely whether to make this move; it is when and how.

The organizations best positioned for the next decade of clinical development are those that treat the EDC not as passive infrastructure, but as an active contributor to trial execution, one that surfaces risk early, connects data across functions, and scales with the portfolio rather than against it. Modern platforms make that possible. The transition is the starting point.

Clinion EDC: An AI-Native Platform

Clinion EDC is designed as an AI-native platform, embedding intelligence from the start rather than adding it later. With automated CDASH mapping, AI-driven data review and reporting, rSDV, fast study setups, and mid-study changes with zero downtime, it simplifies trial execution while reducing time and cost. The platform supports studies seamlessly from build to close, helping teams focus on outcomes instead of system limitations.

Abriti Rai writes on the intersection of AI, automation, and clinical research. At Clinion, she develops content that simplifies complex innovations and highlights how technology is shaping the next generation of data-driven clinical trials.

Frequently Asked Questions

Switching mid-study raises concerns around data traceability, validation continuity, and site disruption. Modern migrations address this through metadata mapping, parallel validation environments, and controlled rollout strategies that allow studies to continue without downtime.

Timelines vary by portfolio size, but structured migrations today focus on configuration mapping rather than rebuilding, reducing transition time significantly compared to traditional reimplementation approaches.

Yes. With validated transfer methodologies, full audit trails, lineage preservation, and risk-based validation, regulators can trace every data point from source to submission, even after migration.

AI-enabled EDCs reduce manual reconciliation, identify anomalies earlier, automate SDV workflows, and streamline database builds. This shifts teams from reactive data cleaning to continuous quality oversight, accelerating database lock readiness.

Key factors include configurability without programming, native integrations, scalability across multiple trials, standardized libraries, and embedded automation that reduces operational dependency on external tools.

Yes, but effective transitions focus on workflow transformation rather than software navigation. Training aligns teams to new efficiencies instead of replicating legacy habits.

When executed properly, inspections are not negatively impacted. Structured documentation, validation logs, and preserved metadata ensure audit readiness throughout and after migration.

Organizations typically see faster study startup, reduced manual review effort, fewer reconciliation cycles, improved data visibility, and scalable trial execution without proportional headcount increases.
